Hi Peter, I have had a similar problem for months now. The night is a bad time for investigating such issues, and because of that and the bad weather I still have not found a solution. Could you please try an experiment?

For polar alignment and plate solving, I simply switch to my guiding camera and the guide scope before I start. You must always change both the camera and the telescope, otherwise it can't work. Please check whether it works for you too when you are not using a DSLR; in my case it works fine then. This is okay for polar alignment but not very helpful for framing the target, so I always try to keep the guide scope pointing at the same position as the primary scope. It's just a workaround until we have found the reason for this issue. Please let me know if it also works for you without the DSLR. If so, we are in the same boat... hopefully this helps to find the cause soon.


Hello Wolfgang,

Nowadays I store my images locally, simply because the latest image is then always shown in the image preview inside the Ekos manager, and I want to see whether the conditions have changed. That is a very important requirement in this cloudy summer (once everything is set up, I sit inside my garden house and can't see the clouds outside). I now connect KStars remotely over WLAN to my Raspberry Pi running Astroberry. In the past, however, I worked very differently.

1.) When I first used KStars, I ran it directly on the RPi and controlled it over a VNC connection. My images were stored on the camera's SD card and on a USB stick attached to the RPi (to avoid writing to the RPi's SD card). The RPi's WLAN hotspot was not very stable, so I decided to improve the WLAN in my garden with a repeater.

2.) Now that I was using my home WLAN, it was better to run KStars remotely on a small MacBook Air, because I wanted to reduce the processing load on the RPi. However, I also wanted to avoid guiding issues caused by WLAN instabilities. For this reason I again used PHD2 for guiding on the RPi, and to avoid heavy WLAN traffic that might cause instability, I stored the images only remotely on the RPi. This helps a lot if the WLAN suffers interference from other signals, because there is only very little network traffic. Despite this, I could still do a capture and solve to get better framing for my target. Back then, this feature still worked for me...

The downside of this second approach is that you can only see the last preview inside the preview window if you have disabled the external FITS viewer window. After some nights without any WLAN issues, I decided to store the images both locally and remotely on the USB stick attached to the RPi. I just wanted to be sure that all images I captured were at least stored on the USB stick. It's also easier to plug the stick into your desktop machine later to do the processing. The decision to store locally was made mainly to see what was going on. I also had some issues with dithering, because the default dithering offset was much too small (walking noise in all images despite dithering, with no noticeable offset between the images).

Finally, I no longer store the images remotely, because when the WLAN is stable enough and you are also downloading the images locally, it doesn't make much sense. However, on nights when WLAN signals in the neighborhood interfere with my own WLAN and a 300-480 s exposure gets lost, I would switch to storing the images remotely and/or stop storing them locally. I don't really like this workflow, but I cannot use a cable for this connection. I'm not really convinced that this would help much in that case, but at least I would give it a try. I'm not sure what would happen to the entire system if the connection dropped from time to time; maybe it would not really help. However, if my WLAN were really slow, I would definitely prefer storing the images only remotely: a 38 MB image currently takes about 9 s to transfer, and anything longer than 30 s would be unacceptable, because too much can happen during that time.
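For reference, the transfer figures above can be turned into throughput numbers. This is a minimal sketch that assumes "MB" means megabytes and ignores protocol overhead; the 38 MB, 9 s and 30 s values come from the post, while the constant and function names are hypothetical.

```python
# Back-of-the-envelope WLAN throughput check for image downloads.
# Assumption: "MB" means megabytes; protocol overhead is ignored.

FRAME_MB = 38.0   # frame size quoted in the post
OBSERVED_S = 9.0  # observed transfer time over WLAN
LIMIT_S = 30.0    # longest transfer time considered acceptable


def rate_mb_per_s(size_mb: float, seconds: float) -> float:
    """Average throughput in megabytes per second."""
    return size_mb / seconds


def to_mbit_per_s(mb_per_s: float) -> float:
    """Convert megabytes per second to megabits per second."""
    return mb_per_s * 8.0


if __name__ == "__main__":
    observed = rate_mb_per_s(FRAME_MB, OBSERVED_S)
    minimum = rate_mb_per_s(FRAME_MB, LIMIT_S)
    print(f"observed: {observed:.2f} MB/s ({to_mbit_per_s(observed):.1f} Mbit/s)")
    print(f"minimum acceptable: {minimum:.2f} MB/s ({to_mbit_per_s(minimum):.1f} Mbit/s)")
```

With these numbers the link currently delivers roughly 4.2 MB/s (about 34 Mbit/s), and the 30 s limit corresponds to roughly 1.3 MB/s (about 10 Mbit/s).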

Regards,

Jürgen


Hello everyone,

I just wanted to share some information. Over the last few weeks I struggled with compiling KStars from source on my M1 Mac. For me this is a bit more complicated, because I develop apps for iOS and during the summer months I always use the latest betas of Xcode and macOS. So even if a build script works in general, there is no guarantee that it will work on my machine or that all libraries are present. Furthermore, it feels more natural to me to build such projects on Linux to avoid introducing new problems.

To keep it short: I don't want to buy a new Parallels license, because I no longer want to use Windows for private purposes and the price is too high (I would have to pay the full price again). I discovered UTM for Mac, which can run ARM64 Windows or Linux. However, at first it did not work due to memory and network issues: the virtual machine kept losing its network connection after a short time. Unfortunately, I also did not have another machine to install Linux on, and, as you might know, there is still no Boot Camp on M1 Macs.

For those who are in the same situation and want to have everything on a separate Linux machine:

UTM releases

Use the latest beta (2.2.1). It's nearly stable, seems to have no memory leaks, and you can now configure it to share your network, so the connection is always available. I installed Ubuntu arm64 on it, plus Qt and all the libraries (many library names have changed, so the build scripts need to be checked). I finally got all the libraries required for KStars, and the KStars build finished successfully, yeah!!!

I thought it would be a good idea to share this with you; maybe others have also switched from Intel Macs to the new Apple Silicon Macs or want to switch in the near future. UTM seems to be worth a try, because it's really fast and it's easy to set up a VM.

I hope this helps someone save time.

Jürgen


I did some more research during daylight. I captured images with different exposures (without any stars, of course) and could see some images. But I also got a message that the image could not be downloaded (unspecific error), and this happened very often. I also tested the GPhoto driver. I will retry in the next cloudless night using GPhoto, because I have not tried it under a real sky yet.


I have already reinstalled KStars twice on my Mac to resolve nearly the same issue, but it did not help. Maybe you will have more success.


Go to your home folder in the Finder and press Shift+Cmd+. to show all hidden files. Everything you need is in

~/Library/Application Support/kstars

Here you will find the folder gsc containing the GSC catalogue, the folders darks and defectmaps containing any captured dark frame information, astrometry containing all the index files, and analyze with the analyze files from your last sessions. Your settings database is also here in userdb.sqlite, together with a backup of it (you should also store this file somewhere else so that you have another copy if you need it later). Don't remove any other files from here unless you plan a reinstallation.
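Following the advice to keep a second copy of userdb.sqlite, here is a minimal sketch in Python. It assumes the macOS location given above; the backup folder ~/kstars-backups and the function name are hypothetical choices. It uses the sqlite3 module's online backup API instead of a plain file copy, on the assumption that KStars might still have the database open.

```python
import sqlite3
from datetime import datetime
from pathlib import Path

# macOS location named in the post.
KSTARS_DIR = Path.home() / "Library" / "Application Support" / "kstars"
# Hypothetical destination; ideally a different disk or a synced folder.
BACKUP_DIR = Path.home() / "kstars-backups"


def backup_userdb(src_dir: Path = KSTARS_DIR, dest_dir: Path = BACKUP_DIR) -> Path:
    """Copy userdb.sqlite into dest_dir under a timestamped name and return the new path."""
    src = src_dir / "userdb.sqlite"
    if not src.is_file():
        raise FileNotFoundError(f"no settings database at {src}")
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / f"userdb-{datetime.now():%Y%m%d-%H%M%S}.sqlite"

    # The online backup API copies a consistent snapshot of the database,
    # which is safer than a raw file copy if the database is in use.
    source = sqlite3.connect(src)
    target = sqlite3.connect(dest)
    try:
        source.backup(target)
    finally:
        target.close()
        source.close()
    return dest
```

Calling backup_userdb() with no arguments uses the two default paths; pass other folders if your setup differs.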


Hi Jasem,

The screenshot was made at ISO 1600 and 15 s, but I also tried ISO 6400 and exposures of up to 60 s (I would have entered a longer exposure time, but 60 s seems to be the limit, which makes some sense for unguided imaging).


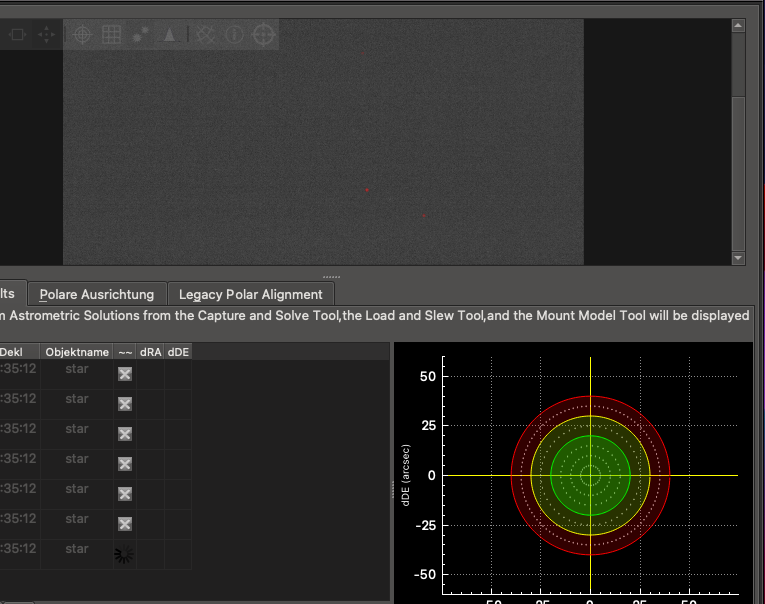

Okay, images from the simulator could be solved, but this does not help at all! With a real sky and real devices I still have problems. Last night I spent 3 hours testing several things. I tried running KStars directly on the RPi4 and I tried KStars connected remotely to the RPi4, found a lot of new issues, and in the end capture & solve still did not work.

When I try to capture & solve using my Canon EOS 700D, it always fails, both on the RPi4 and via a remote connection. And as I mentioned earlier, I cannot see a single star in the image shown during capture and solve!

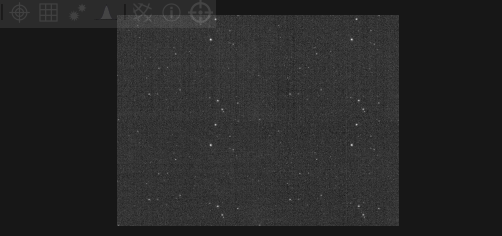

When I capture a preview image with the same exposure time, there are always stars in the image, and the stars are also in my final images when I get as far as running a capture session. However, as you can see, not a single star is visible in this image. I retried this with different exposure times up to 60 seconds and never saw a single star! There is no filter attached to the camera. The same image taken as a preview from the Capture module contains stars. So that is the problem: no stars, but only here. It is as if the module were just taking dark frames (and no, I did not forget to remove the lens cap).

However, that is not all. Hoping to benefit from some fixes, I also updated my RPi4 Astroberry to the latest version yesterday. Another big mistake: the update made things even worse. Now I also cannot capture and solve anymore with my ZWO ASI120MM Mini mono guiding camera. The reason here: I get stars, but the image contains only one half, and the other half repeats the same star pattern. Sometimes the repetition is horizontal, sometimes vertical (but this may just be a preview effect, so the image might sometimes be rotated). You can see it here:

The same effect also occurs when running KStars on the RPi4. Sometimes it helps to restart the INDI server; just restarting the ZWO driver did not have the same effect. But when none of that helped, rebooting the RPi4 always did (just like Windows, you need to reboot...).

It smells like a bug; at least I'm running out of ideas. What can I do to provide more information to help fix this?
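One way to quantify the "half image" symptom, rather than relying only on screenshots, is to check whether one half of a frame is essentially a copy of the other. The sketch below is a hypothetical diagnostic, not part of KStars or INDI. It assumes the frame has already been loaded as a 2-D NumPy array by whatever FITS reader you use, and it uses a correlation threshold of 0.95, which is an arbitrary choice.

```python
from typing import Optional

import numpy as np


def _similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Pearson correlation between two equally sized pixel blocks (0.0 if either is flat)."""
    a = a.astype(float).ravel()
    b = b.astype(float).ravel()
    if a.std() == 0.0 or b.std() == 0.0:
        return 0.0
    return float(np.corrcoef(a, b)[0, 1])


def repeated_half(image: np.ndarray, threshold: float = 0.95) -> Optional[str]:
    """Return "left/right" or "top/bottom" if one half looks like a copy of the other, else None."""
    h, w = image.shape
    # Compare equally sized halves; for odd sizes the middle row/column is skipped.
    if _similarity(image[:, : w // 2], image[:, w - w // 2 :]) >= threshold:
        return "left/right"
    if _similarity(image[: h // 2, :], image[h - h // 2 :, :]) >= threshold:
        return "top/bottom"
    return None


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    half = rng.random((40, 30))
    print(repeated_half(np.hstack([half, half])))  # synthetic frame with a left/right copy
    print(repeated_half(rng.random((80, 60))))     # synthetic unaffected frame
```

Running this over a series of saved guide frames would give a count of affected frames, which may be easier to attach to a bug report than individual screenshots.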

A short part of the log for the Canon issue:

021-08-20T22:14:19 Solver Failed.

2021-08-20T22:14:19 Child solver: 1 did not solve or was aborted

2021-08-20T22:14:19 Child solver: 4 did not solve or was aborted

2021-08-20T22:14:19 Child solver: 3 did not solve or was aborted

2021-08-20T22:14:19 Child solver: 2 did not solve or was aborted

2021-08-20T22:14:19 Starting Internal StellarSolver Astrometry.net based Engine with the 1-Default profile. . .

2021-08-20T22:14:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:14:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 210.056 to 373.272

2021-08-20T22:14:19 Scale range: 101.294 to 180 degrees wide

2021-08-20T22:14:19 Starting Internal StellarSolver Astrometry.net based Engine with the 1-Default profile. . .

2021-08-20T22:14:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:14:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 93.4735 to 210.056

2021-08-20T22:14:19 Scale range: 45.075 to 101.294 degrees wide

2021-08-20T22:14:19 Starting Internal StellarSolver Astrometry.net based Engine with the 1-Default profile. . .

2021-08-20T22:14:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:14:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 0.207373 to 23.5239

2021-08-20T22:14:19 Scale range: 0.1 to 11.3438 degrees wide

2021-08-20T22:14:19 Starting Internal StellarSolver Astrometry.net based Engine with the 1-Default profile. . .

2021-08-20T22:14:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:14:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 23.5239 to 93.4735

2021-08-20T22:14:19 Scale range: 11.3438 to 45.075 degrees wide

2021-08-20T22:14:19 Solver # 4, Low 101.294, High 180 arcminwidth

2021-08-20T22:14:19 Solver # 3, Low 45.075, High 101.294 arcminwidth

2021-08-20T22:14:19 Solver # 2, Low 11.3438, High 45.075 arcminwidth

2021-08-20T22:14:19 Solver # 1, Low 0.1, High 11.3438 arcminwidth

2021-08-20T22:14:19 Starting 4 threads to solve on multiple scales

2021-08-20T22:14:19 Stars Found after Filtering: 6

2021-08-20T22:14:19 Stars Found before Filtering: 6

2021-08-20T22:14:19 Starting Internal StellarSolver Sextractor with the 1-Default profile . . .

2021-08-20T22:14:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:14:19 There should be enough RAM to load the indexes in parallel.

2021-08-20T22:14:19 Note: Free RAM for now is reported as Installed RAM on MacOS until I figure out how to get available RAM

2021-08-20T22:14:19 Evaluating Installed RAM for inParallel Option. Total Size of Index files: 0.13579 GB, Installed RAM: 8 GB, Free RAM: 8 GB

2021-08-20T22:14:19 Automatically downsampling the image by 3

2021-08-20T22:14:19 Image received.

2021-08-20T22:16:24 Solver Failed.

2021-08-20T22:16:24 Child solver: 3 did not solve or was aborted

2021-08-20T22:16:24 Child solver: 2 did not solve or was aborted

2021-08-20T22:16:24 Child solver: 4 did not solve or was aborted

2021-08-20T22:16:24 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:16:24 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:16:24 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 5.33986 to 11.7555

2021-08-20T22:16:24 Scale range: 2.575 to 5.66875 degrees wide

2021-08-20T22:16:24 Child solver: 1 did not solve or was aborted

2021-08-20T22:16:24 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:16:24 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:16:24 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 11.7555 to 20.7373

2021-08-20T22:16:24 Scale range: 5.66875 to 10 degrees wide

2021-08-20T22:16:24 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:16:24 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:16:24 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:16:24 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:16:24 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 0.207373 to 1.4905

2021-08-20T22:16:24 Scale range: 0.1 to 0.71875 degrees wide

2021-08-20T22:16:24 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 1.4905 to 5.33986

2021-08-20T22:16:24 Scale range: 0.71875 to 2.575 degrees wide

2021-08-20T22:16:24 Solver # 4, Low 5.66875, High 10 arcminwidth

2021-08-20T22:16:24 Solver # 3, Low 2.575, High 5.66875 arcminwidth

2021-08-20T22:16:24 Solver # 2, Low 0.71875, High 2.575 arcminwidth

2021-08-20T22:16:24 Solver # 1, Low 0.1, High 0.71875 arcminwidth

2021-08-20T22:16:24 Starting 4 threads to solve on multiple scales

2021-08-20T22:16:24 Stars Found after Filtering: 5

2021-08-20T22:16:24 Keeping just the 50 brightest stars

2021-08-20T22:16:24 Removing the stars with a/b ratios greater than 1.5

2021-08-20T22:16:24 Stars Found before Filtering: 5

2021-08-20T22:16:24 Starting Internal StellarSolver Sextractor with the 4-SmallScaleSolving profile . . .

2021-08-20T22:16:24 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:16:24 There should be enough RAM to load the indexes in parallel.

2021-08-20T22:16:24 Note: Free RAM for now is reported as Installed RAM on MacOS until I figure out how to get available RAM

2021-08-20T22:16:24 Evaluating Installed RAM for inParallel Option. Total Size of Index files: 0.13579 GB, Installed RAM: 8 GB, Free RAM: 8 GB

2021-08-20T22:16:24 Automatically downsampling the image by 3

2021-08-20T22:16:24 Image received.

2021-08-20T22:15:51 Capturing image ...

2021-08-20T22:18:19 Solver Failed.

2021-08-20T22:18:19 Child solver: 4 did not solve or was aborted

2021-08-20T22:18:19 Child solver: 2 did not solve or was aborted

2021-08-20T22:18:19 Child solver: 3 did not solve or was aborted

2021-08-20T22:18:19 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:18:19 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:18:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:18:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:18:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 1.4905 to 5.33986

2021-08-20T22:18:19 Child solver: 1 did not solve or was aborted

2021-08-20T22:18:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 11.7555 to 20.7373

2021-08-20T22:18:19 Scale range: 0.71875 to 2.575 degrees wide

2021-08-20T22:18:19 Scale range: 5.66875 to 10 degrees wide

2021-08-20T22:18:19 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:18:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:18:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 5.33986 to 11.7555

2021-08-20T22:18:19 Scale range: 2.575 to 5.66875 degrees wide

2021-08-20T22:18:19 Starting Internal StellarSolver Astrometry.net based Engine with the 4-SmallScaleSolving profile. . .

2021-08-20T22:18:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:18:19 Image width: 5208 pixels, Downsampled Image width: 1736 pixels; arcsec per pixel range: 0.207373 to 1.4905

2021-08-20T22:18:19 Scale range: 0.1 to 0.71875 degrees wide

2021-08-20T22:18:19 Solver # 4, Low 5.66875, High 10 arcminwidth

2021-08-20T22:18:19 Solver # 3, Low 2.575, High 5.66875 arcminwidth

2021-08-20T22:18:19 Solver # 2, Low 0.71875, High 2.575 arcminwidth

2021-08-20T22:18:19 Solver # 1, Low 0.1, High 0.71875 arcminwidth

2021-08-20T22:18:19 Starting 4 threads to solve on multiple scales

2021-08-20T22:18:19 Stars Found after Filtering: 3

2021-08-20T22:18:19 Keeping just the 50 brightest stars

2021-08-20T22:18:19 Removing the stars with a/b ratios greater than 1.5

2021-08-20T22:18:19 Stars Found before Filtering: 3

2021-08-20T22:18:19 Starting Internal StellarSolver Sextractor with the 4-SmallScaleSolving profile . . .

2021-08-20T22:18:19 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2021-08-20T22:18:19 There should be enough RAM to load the indexes in parallel.

2021-08-20T22:18:19 Note: Free RAM for now is reported as Installed RAM on MacOS until I figure out how to get available RAM

2021-08-20T22:18:19 Evaluating Installed RAM for inParallel Option. Total Size of Index files: 0.13579 GB, Installed RAM: 8 GB, Free RAM: 8 GB

2021-08-20T22:18:19 Automatically downsampling the image by 3

2021-08-20T22:18:19 Image received.

2021-08-20T22:17:34 Capturing image ...
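The scale ranges in this log are consistent with field width = arcsec per pixel × downsampled width / 3600 (the per-solver lines label the same numbers "arcminwidth", but they match the "degrees wide" values). The sketch below reproduces that relation and estimates the expected field for this setup. The roughly 4.3 µm pixel pitch of the EOS 700D and the 600 mm focal length (the 80/600 refractor from the profile) are assumptions; the function names are hypothetical.

```python
# Relates plate scale (arcsec/pixel) to field width, as in the StellarSolver log lines.
ARCSEC_PER_DEGREE = 3600.0
# 206265 arcsec per radian, divided by 1000 to combine micrometres with millimetres.
PLATE_SCALE_CONSTANT = 206.265


def field_width_deg(arcsec_per_px: float, width_px: int) -> float:
    """Field width in degrees for a given plate scale and image width."""
    return arcsec_per_px * width_px / ARCSEC_PER_DEGREE


def plate_scale(pixel_um: float, focal_mm: float) -> float:
    """Plate scale in arcsec/pixel from pixel pitch (micrometres) and focal length (mm)."""
    return PLATE_SCALE_CONSTANT * pixel_um / focal_mm


if __name__ == "__main__":
    # Ranges copied from the log, using the downsampled width of 1736 px.
    for lo, hi in [(0.207373, 23.5239), (23.5239, 93.4735)]:
        print(f"{field_width_deg(lo, 1736):.4f} to {field_width_deg(hi, 1736):.4f} deg")

    # Assumptions: ~4.3 um pixels (EOS 700D) behind a 600 mm focal length.
    scale = plate_scale(4.3, 600.0)
    print(f"expected: {scale:.3f} arcsec/px, {field_width_deg(scale, 5208):.2f} deg wide")
```

Under those assumptions the expected field is about 2.1° wide, which lies inside the ranges searched in every run above (0.1 to 11.3438° in the Default run, 0.71875 to 2.575° in the SmallScaleSolving runs). That suggests the scale search itself was not the problem; with only 3 to 6 stars detected, the missing stars described above are the more likely cause.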


Thank you for your explanations. I'm happy to hear that some further improvements are planned, and I will be happy to assist you. I think the most important problem with the UI is simply that all supported guiders have slightly different logic and behavior, which results in many different settings spread across different places in the UI. I have not tried GPG guiding yet; my polar alignment now seems to be very good in DEC, but there are still smaller corrections in DEC. But I will give it a try some day, just to get some experience with it.

Regards,

Jürgen


I really like the internal guider, especially MultiStar guiding. However, I have had mixed experiences with the guiding quality. It works, but I cannot really control the guiding quality, because there are a lot of unknown variables:

- the guiding pulse duration

- the proportional gain

Both are very technical aspects of the guiding mechanics behind the scenes. I cannot really describe the problem with all this; it just feels less natural compared to the way I think about guiding. On the other hand, it seems that my NEQ6 introduces some problems, at least with pulse guiding. For now, I'm back to PHD2 using an ST4 cable. Not because the internal guider is not good enough, but because I'm still new to KStars/Ekos, and I got the best results with the ST4 cable and PHD2: here I get a nearly flat graph if I have good polar alignment and the seeing is not too bad. And I have some easy-to-understand controls for aggressiveness and hysteresis on both axes. These are things I can directly relate to what I want: hysteresis against overcorrection and aggressiveness for responsiveness. Thinking in terms of pulse duration (when I don't even know how long the pulse should be) and a proportional factor makes it much harder to express how I want to control the curve; it's a less mathematical and more technical perspective on nearly the same thing. What I miss when using PHD2 guiding with Ekos is the visual feedback from the camera; it is really soothing to see the stars matched inside their boxes. So if I had better control over the internal guider, I would really prefer to use it (needless to say, not having control over it is just a user feeling).


I would really appreciate seeing some improvements in the UI, and perhaps in the logic, of the internal guider in KStars 3.6 or so. I understand that it is not as easy as it might seem, but good things take time.
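To illustrate the point that both approaches describe nearly the same thing, here is a simplified one-axis model. The hysteresis part is loosely modelled on PHD2's "Hysteresis" algorithm (blend a fraction of the previous correction into the current error, scale by aggressiveness, and skip corrections below a minimum move); the proportional part is a generic controller, not the actual Ekos code. The calibration rate, parameter values and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HysteresisAxis:
    """Simplified one-axis guide algorithm loosely modelled on PHD2's 'Hysteresis'.

    All values are illustrative, not PHD2 defaults.
    """
    aggressiveness: float = 0.7  # fraction of the (blended) error corrected per frame
    hysteresis: float = 0.1      # weight of the previous correction in the blend
    min_move_px: float = 0.15    # errors smaller than this are ignored
    last_px: float = 0.0         # previous correction, in pixels

    def correction_px(self, error_px: float) -> float:
        blended = (1.0 - self.hysteresis) * error_px + self.hysteresis * self.last_px
        correction = self.aggressiveness * blended
        if abs(error_px) < self.min_move_px:
            correction = 0.0
        self.last_px = correction
        return correction


def proportional_px(error_px: float, gain: float) -> float:
    """Generic proportional controller: correction = gain * error."""
    return gain * error_px


def pulse_ms(correction_px: float, rate_px_per_ms: float) -> int:
    """Turn a correction in pixels into a pulse length using a calibrated guide rate."""
    return round(correction_px / rate_px_per_ms)


if __name__ == "__main__":
    axis = HysteresisAxis(hysteresis=0.0)
    rate = 0.002  # hypothetical calibration result in pixels per millisecond
    for err in (1.0, 0.5, 0.1):
        h = axis.correction_px(err)
        p = proportional_px(err, 0.7)
        print(f"error {err:.2f} px: hysteresis {h:.3f} px, proportional {p:.3f} px, "
              f"pulse {pulse_ms(h, rate)} ms")
```

With hysteresis set to 0, aggressiveness and proportional gain play exactly the same role. Converting the correction into a pulse length then only needs the calibrated guide rate, and that extra step is what makes the pulse-duration view feel more technical.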


Basic Information

Gender: Male

Birthdate: 06. 01. 1972

About me

Skywatcher NEQ6

60mm/240mm Guide Scope

80/600 Refractor

Canon EOS 700Da

Contact Information

City / Town: Halle/Saale

Country: Germany